As I mention in my “about me” section, I have been reading the climate change literature since the early 1990s. In doing so I have developed my personal views on the topic, which are loosely defined as those of a “lukewarmer”. It has been pointed out to me that my definition may miss a large community of lukewarmers, but that is the source material for a whole new post. Instead, having worked in both the academic sector and the private sector (for both communities, so to speak), I would like to write today about the divide between the skeptical community (mostly made up of engineers and non-academic scientists) and the warmists (primarily made up of academic scientists). I am deliberately not addressing activists on either side, as their motivations are not germane to this discussion. In this post I will consider whether cultural tendencies associated with efforts to avoid Type I versus Type II errors might help explain the two communities’ inability to communicate effectively.

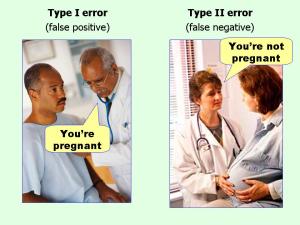

For those of you who are not familiar with the language, science identifies two major types of potential error (okay, there are at least three types, but I will not go that deep today). A Type I error, or false positive, involves claiming that a hypothesis is correct (that an effect exists) when in reality it is false. A Type II error, or false negative, involves rejecting a hypothesis (concluding that no effect exists) when it is actually correct. In my opinion, the best visualization of the difference between the two is the graphic above, which I have seen in numerous locations and whose origin I have been unable to confirm, although I believe it comes from the “Effect Size FAQ”.
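To make the two definitions concrete, the following minimal Python sketch (an illustration only, not drawn from the graphic or the FAQ) assumes a simple one-sided test in which the standardized statistic is N(0, 1) when there is no effect and N(shift, 1) when there is one. The function name `error_rates` and the shift of 2.0 are hypothetical choices. Moving the decision threshold trades one type of error against the other.

```python
from statistics import NormalDist


def error_rates(threshold: float, shift: float) -> tuple[float, float]:
    """Return (alpha, beta) for a one-sided test that declares "effect present"
    whenever the standardized test statistic exceeds `threshold`.

    Assumption: with no real effect the statistic follows N(0, 1); with a real
    effect it follows N(shift, 1).
    """
    no_effect = NormalDist(mu=0.0, sigma=1.0)
    real_effect = NormalDist(mu=shift, sigma=1.0)
    alpha = 1.0 - no_effect.cdf(threshold)  # Type I: false positive rate
    beta = real_effect.cdf(threshold)       # Type II: false negative rate
    return alpha, beta


# Raising the threshold lowers alpha but raises beta for the same effect size.
for threshold in (1.0, 1.645, 2.326):
    alpha, beta = error_rates(threshold, shift=2.0)
    print(f"threshold={threshold:.3f}  alpha={alpha:.3f}  beta={beta:.3f}")
```

With the assumed shift of 2.0, raising the threshold from 1.0 to 2.326 cuts alpha from about 0.16 to 0.01 while beta rises from about 0.16 to roughly 0.63. A conventional fixed alpha such as 0.05 is a choice about where to sit on this trade-off.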

In the academic community a Type I error is a very big deal. Making an incorrect claim is the sort of thing that gets academics in very hot water. A Type I error leads to retractions of academic papers and a significant loss of prestige. As a consequence, the academic community has developed an extremely detailed process for avoiding Type I errors, involving confidence limits, acceptable p-values, peer review, etc. In the academic community a Type II error is a far less important concern. Since science is theoretically a collegial affair (many colleagues are working on similar problems at the same time), all a Type II error represents is that you didn’t identify the effect “this time”, which means you still have another shot tomorrow: no mess, no worry.

In the private sector, it is almost completely reversed. Consider my personal area of expertise: contaminated sites. If I am leading an investigation at a contaminated site and make a Type I error, all that means is that I have inferred the presence of contamination where it doesn’t actually exist. The outcome of this error is the need for further investigation to characterize and/or delineate the contamination. The further work will either confirm that the contamination exists or provide further data to rule it out. This may entail some additional cost and, if the inference proves wrong, some minor loss of face, but it is not the sort of thing that gets one fired (if it is an isolated event). A false negative, on the other hand, is a much more serious issue, as private sector scientists typically work in smaller groups with less cooperation with the wider community on individual projects (there is limited oversight due to the limited scopes of the problems and issues around sharing proprietary information). Thus, errors are less likely to be caught before the consequences become critical.
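The asymmetry can be expressed as a simple expected-cost comparison. The Python sketch below is illustrative only: the cost figures are invented, the function names are hypothetical, and it assumes a consultant can put a probability on contamination being present. Under those assumptions, flagging the site is the cheaper call whenever the probability of contamination exceeds cost_false_alarm / (cost_false_alarm + cost_miss).

```python
def expected_costs(p_contaminated: float, cost_false_alarm: float, cost_miss: float) -> tuple[float, float]:
    """Return (expected cost of flagging, expected cost of clearing) a site.

    Flagging risks a Type I error (contamination inferred where there is none);
    clearing risks a Type II error (contamination missed).
    """
    cost_if_flagged = (1.0 - p_contaminated) * cost_false_alarm
    cost_if_cleared = p_contaminated * cost_miss
    return cost_if_flagged, cost_if_cleared


def break_even_probability(cost_false_alarm: float, cost_miss: float) -> float:
    """Probability of contamination above which flagging is the cheaper call."""
    return cost_false_alarm / (cost_false_alarm + cost_miss)


# Hypothetical figures: extra investigation vs. clean-up, delays and litigation.
COST_FALSE_ALARM = 20_000
COST_MISS = 2_000_000

threshold = break_even_probability(COST_FALSE_ALARM, COST_MISS)
print(f"flag the site if P(contaminated) > {threshold:.3%}")
for p in (0.005, 0.05):
    flagged, cleared = expected_costs(p, COST_FALSE_ALARM, COST_MISS)
    print(f"p={p:.3f}: flag -> {flagged:,.0f}, clear -> {cleared:,.0f}")
```

With these made-up numbers the break-even probability is just under 1%, so even a weak suspicion of contamination justifies more work, which is the Type II error avoidance mindset in a nutshell.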

Imagine telling a property owner that a property is clean when it isn’t. The owner, acting on your advice, then sells the property to be developed for another use. If, in the course of the development, the contamination is found, the development stops. The new owner will need to clean up the contamination, and the costs associated with the clean-up and the delays in the development (mortgage costs, permitting, subcontractor costs, etc.) will get passed on to your client. Lawsuits almost always ensue, and someone (usually the consultant who missed the contamination) is going to pay a heavy cost. An even worse scenario occurs if the contamination is not uncovered until after the development has been built, because then human and ecological health could be put at risk. The term “Love Canal”, or some similar local example, is burned into the memory of every environmental consultant early in their career as a warning against repeats. The only effective ways to avoid Type II errors are to increase your sampling data density or to develop additional “process knowledge”. Process knowledge in this case is an understanding of the sources of the contamination and the processes that govern the movement of the contaminants in the subsurface. Without detailed process knowledge (or a good conceptual site model), an effective investigation plan cannot be developed.
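The benefit of sampling density can be quantified under strong simplifying assumptions. The sketch below assumes that samples are placed independently at random, that a contaminated “hot spot” occupies a known fraction of the site, and that any sample landing in it detects the contamination; the function names are hypothetical. Real sampling designs (systematic grids, or judgmental sampling guided by process knowledge) behave differently.

```python
import math


def miss_probability(n_samples: int, hotspot_fraction: float) -> float:
    """Chance that n independent, randomly placed samples all miss a hot spot
    occupying `hotspot_fraction` of the site (i.e. a Type II error)."""
    return (1.0 - hotspot_fraction) ** n_samples


def samples_needed(hotspot_fraction: float, target_detection: float) -> int:
    """Smallest n whose detection probability 1 - (1 - f)**n reaches the target."""
    return math.ceil(math.log(1.0 - target_detection) / math.log(1.0 - hotspot_fraction))


for fraction in (0.10, 0.05, 0.01):
    n = samples_needed(fraction, 0.95)
    print(f"hot spot = {fraction:.0%} of site: {n} samples for 95% detection "
          f"(miss probability {miss_probability(n, fraction):.3f})")
```

Halving the size of the hot spot roughly doubles the number of samples needed (29 samples at 10% versus 59 at 5% for 95% detection), which is one way to see why process knowledge that tells you where to look is so valuable.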

So why is this difference important? Well, as discussed, the vast majority of the warmist community have a worldview that stresses Type I error avoidance, while most skeptics work in a community that stresses Type II error avoidance. Skeptics look at the global climate models and note that the models have real difficulty making accurate predictions. To explain, global climate models are complex computer programs filled with calculations based on science’s best understanding of climate processes (geochemistry, global circulation patterns, etc.), with best guesses used to fill holes in the knowledge base. The models are “trained” by looking at historical data and seeing whether they can replicate what has occurred in the past. In a simplistic description, they are trained to interpolate data, and once they get good enough at interpolating they are used to extrapolate future conditions. Since the global climate models are works in progress, they do not yet do a great job at extrapolating. In particular, these models have failed to predict the “pause” in the surface temperature data that has lasted (depending on whom you talk to) somewhere around 15 – 18 years. Essentially, the model predictions and the measured temperatures have diverged.

Skeptics see the poor extrapolations and suggest a need to refine the models to address the divergence. From a Type II error avoidance viewpoint, given the relatively poor quality of the model predictions, putting limited resources into addressing potentially faulty predictions seems like a poor choice. Instead, resources should be allocated to improving the models, and any additional monies should be spent on other “demonstrably real” problems out in the world. Warmists, on the other hand, point out that the models are the best tools we have to date and that ignoring their predictions just because they are imperfect would be a big mistake. They point out that there is a real risk that a lack of action now could result in a low-probability, high-cost outcome (a fat tail on the uncertainty distribution of the outcomes). So, in their view, monies should be spent on avoidance immediately while we continue to refine the models.
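What a “fat tail” does to the argument can be shown with two hypothetical loss distributions that share the same average loss: a thin-tailed exponential and a fat-tailed Pareto. The parameters and function names in this Python sketch are arbitrary choices for illustration and do not come from any climate damage estimate.

```python
import math

# Two hypothetical loss distributions, both with a mean loss of 1 unit.
PARETO_ALPHA = 1.5                               # tail index (> 1 so the mean exists)
PARETO_XMIN = (PARETO_ALPHA - 1) / PARETO_ALPHA  # scale chosen so the mean is 1


def thin_tail_prob(x: float) -> float:
    """P(loss > x) for an exponential distribution with mean 1."""
    return math.exp(-x)


def fat_tail_prob(x: float) -> float:
    """P(loss > x) for a Pareto distribution with mean 1."""
    return 1.0 if x < PARETO_XMIN else (PARETO_XMIN / x) ** PARETO_ALPHA


def thin_tail_share(x: float) -> float:
    """Share of the expected loss from outcomes above x (exponential, mean 1)."""
    return (x + 1.0) * math.exp(-x)


def fat_tail_share(x: float) -> float:
    """Share of the expected loss from outcomes above x (Pareto, mean 1)."""
    return min(1.0, (x / PARETO_XMIN) ** (1 - PARETO_ALPHA))


for x in (5, 10, 20):
    print(f"loss > {x:>2}x mean: P thin={thin_tail_prob(x):.1e}, fat={fat_tail_prob(x):.1e}; "
          f"share of expected loss thin={thin_tail_share(x):.1%}, fat={fat_tail_share(x):.1%}")
```

With these assumptions, losses above ten times the average are more than a hundred times as likely under the fat-tailed distribution, and they account for about 18% of its expected loss versus well under 0.1% for the thin-tailed one. That concentration of expected cost in rare outcomes is what the low-probability, high-cost argument rests on.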

So where are we today? In 1990s parlance, skeptics are from Mars and warmists are from Venus. As in the book, the two sides are not speaking to each other but rather past each other. Until the two communities can learn to speak each other’s language and acknowledge both the underlying differences between their world views and the ultimate validity of each, we are not going to advance the discussion. The frustrating part, for someone who has worked on both sides of this intellectual chasm, is that neither side is “wrong”. Each lives in a world where risk avoidance decisions are made, and each feels its approach is “right”. We need to develop a “consensus” that acknowledges that both sides have legitimate concerns and that any acceptable compromise has to recognize the validity of differing points of view.

Part of the problem is that the warmist group has embraced the “precautionary principle”, which is almost impossible to deflect. While it is okay to ask, “How can we not make every effort to avoid a warming world?”, there can be no logical discussion unless the negatives and positives of any temperature change are realistically measured against what the same resources could accomplish if used differently.

Both “global warming” and “climate change” are automatically assumed to be detrimental, and the Earth's average temperature over the 30-year stretch from 1960 to 1990 (or whatever period the warmists are pointing to) has been proclaimed to be the human optimum. This kind of reasoning needs to be questioned.

As a trained engineer with a later degree in History and Philosophy of science, I appreciate the intention of this post. I helped organise a conference in Lisbon to get the two sides to talk, and listen, to each other. The arch warmist Gavin Schmidt refused to turn up, telling us that:

“The fundamental conflict is of what (if anything) we should do about greenhouse gas emissions (and other assorted pollutants), not what the weather was like 1000 years ago. Your proposed restriction against policy discussion removes the whole point. None of the seemingly important ‘conflicts’ that are *perceived* in the science are ‘conflicts’ in any real sense within the scientific community, rather they are proxy arguments for political positions.”

Full response here:

http://tallbloke.wordpress.com/2011/02/04/gavin-schmidt-response-to-lisbon-invitation/

In other words, Gavin was saying that the science was settled so far as the academic scientists were concerned. Apart from Dick Lindzen. Oh, and Roy Spencer, and ah yes, John Christy… oh, and many other academic scientists Gavin conveniently defined as politically motivated outsiders.

Interesting how you and Oreskes start off in the same direction and then turn 180 degrees away from each other. http://www.nytimes.com/2015/01/04/opinion/sunday/playing-dumb-on-climate-change.html

But I think her argument (that the scientific community is understating rather than overstating risk) follows better than yours: “When applied to evaluating environmental hazards, the fear of gullibility can lead us to understate threats. It places the burden of proof on the victim rather than, for example, on the manufacturer of a harmful product. The consequence is that we may fail to protect people who are really getting hurt.”

It is exactly as you describe – the engineer is terrified of putting people at risk, while the scientist is trained to make only the most solidly defensible statements. Systematic understatement of perceived climate risk is the result.

I should also suggest that, as far as I can tell, you do not fully understand what a climate model is. It is better described as a simulation than an extrapolation. Further, you do not understand the role of climate models within climate science. All of this is understandable – these are widespread misapprehensions, especially common among the Pollyannists and obfuscators. Further, explaining what is really going on within the discipline is subtle. Some practitioners are better at extracting insight from models than others. But the concern is rooted neither in extrapolation from the observations nor in model output. The concern is rooted in fundamental physics. That's why the Charney report basically got it right in 1979, before paleoclimate was well-established and before the bulk of the warming started. These two more sophisticated endeavors both broadly support the theory as established by Charney.

The idea that the observed “hiatus” outcome is drastically distinct from what was expected is not entirely false but is consistently and grossly overstated by those who are reluctant to face the real scope of the risk as presented by a fair assessment of the evidence.

I would argue the reason we go in different directions is that Dr. Oreskes (and to a lesser extent you) appears to misunderstand what a Type II error represents. Type I and Type II errors don’t involve overstating or understating risk; they involve identifying, not identifying, or misidentifying signals in a world of noise.

The best description of the Oreskes argument I have read to date was presented by Suzy Waldman, a PhD student in risk communication, who said that what Dr. Oreskes is saying is “like asking weather forecaster to look at effects of a bad rain & then to predict rain the next day with more certainty.”

You further indicate that I “do not fully understand what a climate model is”, and I would argue that you are mistaken. During my graduate studies I had the opportunity to look under the hood of one of the first-generation models, so while I am clearly not in your class, I can still recognize that models are complex algorithms. The outputs from the models represent mathematics interpreted through the lens of the models’ creators. You indicate that some modellers are better than others at extracting that information, and that may be true, but from a Type II error avoidance perspective the models remain imperfect, and any decision based on those models must be viewed in the light of that imperfection.

As I have noted elsewhere, however, in many branches of science we are forced to rely on imperfect models because perfection will not be achieved in time for decisions based on the models to be relevant. What needs to be acknowledged is there are legitimate alternative views of how to proceed in a world where certainty is not possible. Those views are coloured by differing experiences, differing priorities and differing weighting of risks. Modellers form an important voice but cannot be the only voice in this chorus.

Waldman is impressive sometimes but I don't see it in this case, at least not in your paraphrase. I wish Oreskes had not used the Type I/Type II distinction at all – this is a naysayer formulation as I try to explain here: https://medium.com/@mtobis/co2-on-trial-41d6534e8ff6

But notwithstanding her acceptance of this misleading formulation, Oreskes' point is simple enough – the career objective of the scientist is to say things that are compellingly true, NOT to speculate about things that are not excluded by the evidence, which in risk management situations are really the important things.

The way I would put it is that climatology is culturally a pure science, not an applied science. This fits in with what you are saying and with what Oreskes is saying.

The risk spectrum includes some outcomes that are as yet unforeseen. The recent increase in meridional jet stream patterns, for instance, was not foreseen, and insofar as I know not simulated by the CMIP models. A posteriori physical arguments attributing them to the change in climate forcing (e.g. those of J. Francis) are not extremely compelling. But as the increase becomes more apparently a real shift in climate, and as human forcing increasingly dominates climate change, it's hard to make a case that these events are NOT somehow a consequence of anthropogenic disruption.

The fact is that we didn't see these events coming. Consequently they do NOT validate the models for the purpose at hand. They do NOT validate climate science. But they DO broaden the risk spectrum. They do constitute evidence of risks that did not appear in older formulations. They do imply that past risk formulations were an underestimate.

From the perspective of a decade ago, this increase in so-called “blocking events” (I think the name is misleading) would be unknown unknowns, sheer speculation. And scientists do not speculate in formal communications. IPCC does not speculate.

On the other hand, over beer in unguarded moments people informed about the climate system DO talk about how difficult it is to know how shifting climate will manifest in synoptic weather, and how unprecedented patterns may appear which carry unquantified risk. This gets filtered out by the IPCC process and appears nowhere in policy discourse.

I don't know as the type I / type II distinction is really all that informative. But the more people know about the system, the more nervous they are.

As for modelers not forming the most important voice, absolutely I agree, but I claim that is a strawman. All practicing climatologists use models or at least refer to model output, but the process is not one of building models and then reading the output. The practice is a far more complex collaboration between various modes of reasoning than that implies.

Funny thing is I agree with almost everything you say in your comment about the state of the science but both it (and the linked piece) reinforce my confidence in my hypothesis.

This is not about sales (or trials) but about the fact that highly educated people inside and outside of academia can have different, and equally legitimate, ways of looking at the world and making decisions. The debate is fueled by scientists like those I work with every day. They are risk-averse but do not immediately share your risk perceptions. Your community does not speak to them in their language, but instead lectures them as if they were first-year undergrads and berates them about why their world-view is incorrect. Some very high-profile members of your community feel free to belittle highly competent, highly educated individuals and call them “stooges” for the fossil fuel interests. Were it cultural, the responses would be called prejudiced or bigoted, but because it is scientific it is just seen as the current state of affairs. It is indeed possible to hold points of view that differ from those of your community without being in the pay or pocket of some special interest.

What I find most disturbing is that members of your community seem very comfortable stepping out of their areas of expertise and lecturing us on how to reduce fossil fuel dependence. I have written quite a bit on the strengths and limitations of various renewable energy technologies, and while I may not know as much about modeling as you, I can likely run circles around most of your peers on methods to reduce fossil fuel dependence and on renewable energy alternatives. If you don’t respect our expertise, it is unlikely that we will reciprocate. Moreover, every time one of your peers spouts off on topics about which they are clearly uninformed (like renewable energy technologies), it causes us to doubt them in their actual area of expertise.

I think you have got things the wrong way round with Type I and II errors wrt climate warmists and sceptics. Here is a comment which I have just posted on Bishop Hill (http://www.bishop-hill.net/blog/2015/1/5/sceptics-are-from-mars-and-warmists-are-from-venus.html) where I first came across your post:

In teaching industrial statistics to engineers and others, I found the fire alarm analogy a useful one for getting across the general idea of Type I and Type II errors.

Suppose you have a fire alarm, perhaps one which responds to ions in the air, which you can adjust to any setting from 'always screeching' to 'always silent'. In between, the sensitivity varies from very high to very low. We shall assume that this is your one and only basis for concluding whether or not a fire is present.

If you leave the alarm 'silent', you will never make the Type I error of wrongly concluding that a fire is present by the simple expedient of never, ever, concluding that a fire is present. You are avoiding the error of 'delusion': the error of declaring that there is a fire when in fact there is none.

If you leave the alarm on permanent screech, you will never make the Type II error which is wrongly concluding that there is no fire. You do this simply by declaring fire is always there. You are avoiding the error of 'oversight': the error of failing to spot a fire when in fact it is there.

In statistical analyses, the risk of a Type I error is usually denoted by the Greek letter alpha, and the risk of a Type II error by beta. So, you set alpha to zero if you leave the alarm set to permanent silence. You set beta to zero by having the alarm set to permanent screech.

In between, you can make either type of error. Type I: you shout 'fire!' when there is none. Type II: you fail to shout 'fire!' when there is one. When there are losses to be expected from both types of error, we will generally want to select some intermediate setting on our alarm. For example, having your building permanently evacuated because the fire alarm never stops is a loss, and having it burn down because your fire alarm never goes off is a loss.
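The trade-off in this analogy can be sketched numerically. The Python below assumes, purely for illustration, that the sensor reading is normally distributed with a higher mean when there is a fire, and that the prior chance of fire and the two costs are known; all of these numbers and function names are hypothetical. It picks the alarm setting that minimizes expected loss by a simple grid search.

```python
from statistics import NormalDist

# Hypothetical sensor: readings are N(0, 1) with no fire and N(3, 1) during a fire.
NO_FIRE = NormalDist(mu=0.0, sigma=1.0)
FIRE = NormalDist(mu=3.0, sigma=1.0)


def alarm_errors(setting: float) -> tuple[float, float]:
    """Alarm sounds when the reading exceeds `setting`; returns (alpha, beta)."""
    alpha = 1.0 - NO_FIRE.cdf(setting)  # Type I: screeching with no fire
    beta = FIRE.cdf(setting)            # Type II: silent during a fire
    return alpha, beta


def expected_loss(setting: float, p_fire: float, cost_false_alarm: float, cost_missed_fire: float) -> float:
    alpha, beta = alarm_errors(setting)
    return (1.0 - p_fire) * alpha * cost_false_alarm + p_fire * beta * cost_missed_fire


def best_setting(p_fire: float, cost_false_alarm: float, cost_missed_fire: float) -> float:
    """Grid search from near 'always screeching' (-3) to near 'always silent' (+6)."""
    settings = [s / 100 for s in range(-300, 601)]
    return min(settings, key=lambda s: expected_loss(s, p_fire, cost_false_alarm, cost_missed_fire))


best = best_setting(p_fire=0.01, cost_false_alarm=1.0, cost_missed_fire=1000.0)
for label, s in (("near screech", -3.0), ("best", best), ("near silent", 6.0)):
    alpha, beta = alarm_errors(s)
    print(f"{label:>12}: setting={s:+.2f} alpha={alpha:.3f} beta={beta:.3f} "
          f"loss={expected_loss(s, 0.01, 1.0, 1000.0):.3f}")
```

For these inputs the best setting is near 0.73, well away from both extremes; making a missed fire ten times costlier pushes it down toward 'screech'. Where the alarm should sit depends on how the two losses are weighed.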

Now in my view, alarmists like Ehrlich, the late Schneider, Hansen, and a host of secondary ones, have their sensitivities way too high, and they make an awful nuisance of themselves by their screeching. Great loss to society has resulted from follies such as windfarms and bio-fuels, and less tangibly from the shocks and scares imposed on children and other vulnerable people being told, often very vividly, that their lives are in great danger. More sensible people, in my view of things, are not so willing to, so to speak, shout 'fire!' because they can see the risk of serious harm if it is a false alarm.

The so-called sceptics, in other words, act as if they wish to avoid the Type I error: which in our simplistic analogy, is the error of raising a false alarm. Alarmists on the other hand act as if they wish to avoid the Type II error, which in our analogy is the error of failing to raise the alarm when in fact there is a fire. The author of the piece quoted in the above post has got this back to front.

No: “Making an incorrect claim THAT GETS OFFICIALLY RECOGNIZED is the sort of thing that gets academics in very hot water.”

There's no real down side to making an incorrect claim that doesn't get noticed, or does get recognized but it's inconvenient to take action.

This, in my opinion, is the real reason for the divide between establishment scientists and sceptics. Nobody likes a smart-ass saying things like: remember how you said the polar ice would melt last year? Or London would be flooded? Or there would be more hurricanes not less? Or, or, or…

It's much easier for establishment scientists to ignore them, rather than attacking their own colleagues.

I am uncertain whom I am being lumped in with. I certainly agree that there are problems in communicating between climate science and other informed sectors of the population. Strongly so. This tendency to oversimplify and over polarize has historical roots. It's partly the result of being confronted by endless pseudo-debate, and partly a consequence of listening to bad advice from “science communication experts”. I try to avoid those pitfalls.

If you want to engage with me, I'd appreciate if you would stop lumping me in with others. It is, ironically, a form of the same mistake you are criticizing.

And for that I apologize. You are right: I do not know you well enough to make generalizations of this sort, and doing so is entirely consistent with the bad habit in the field (which I have indulged in this case) of lumping people into teams rather than acknowledging that we are all individuals with our own personal views and approaches. Essentially, I have fallen into the same trap I am trying to address. I can see how easy it is to do, having been attacked myself (in a relatively mild manner compared to what I have seen in the wilds of this debate), and I must continue to remember that being challenged for one's intellectual position is a very different thing from being challenged as a person. I am preparing a follow-up post to address some perceived (by me) misunderstandings of my initial hypothesis and would continue to appreciate your well-thought-out comments and the fruits of your knowledge and experience.

Um, Michael you make quite clear who you lump yourself with when you talk about 'naysayers'; that's part of the oversimplification and polarising.

The propensity for different communities to weight different false alarms is intriguing and an interesting blog post subject, but from the tone of the 'pseudo-debate' as Michael puts it, I suspect it is swamped by social and community bonds.

A claim that the 'scientist is trained to make only the most solidly defensible statements' is only relevant if scientists *in this field* have a demonstrated history of actually only making them, and similarly with respect to 'their career objective is to say things that are compellingly true, not to speculate'.

These assertions – that academic scientists are objective and therefore we can believe them – are comfortable and compelling, particularly to those in the field who have invested personal reputations in their conclusions: we are right because we are scientists and scientists are scientific.

This is not particularly new or interesting or controversial, but once a Type I error has been made and widely accepted in a research community it can be very hard to reset (ulcers and tectonic plates come to mind). Science-the-human-activity is very Kuhn, even if Science-the-ideal is Popper.

If we can distinguish between deniers and skeptics perhaps it might be a good idea to distinguish between alarmists and scientists.

Ehrlich is not part of the crowd I wish to defend. Schneider and Hansen, however, know (or knew) their stuff.

A real skeptic knows what to do when the evidence for something becomes sufficiently compelling.

For skeptical but informed scientists, this threshold was crossed about 20 years ago. The problem is what to do when the evidence becomes substantially more alarming than the policy sector is willing to credit. I'm not sure there is a good precedent for that. We see all sorts of responses to this messy situation.

Oreskes has argued that the IPCC process errs on the side of complacency. This is not really news to those of us steeped in the matter, but it's something to take into account. A number of other steps in the chain between IPCC and policy do so as well. Accordingly, informal statements about how serious the matter is tend to be out of synch with the formal output.

Y'all are trying to explain this as originating in personality flaws among the scientists as opposed to a systematic failure of the formal process. I submit that while there is something awry you are explaining away the wrong thing. It reminds me of this:

http://www.theonion.com/video/nations-climatologists-exhibiting-strange-behavior,21009/

I am definitely part of the community that is being criticized, not just a sympathizer.

I am bending over backwards to not say the D-word as I know that kills conversation. But I am among those who are reluctant to categorize the opposition as “skeptics”; a true skeptic applies skepticism to his own position first. I see very little of that among those who think what climate science says should be discounted.

That said, I see the validity of your argument in principle. I discount many of the arguments of economists for very similar reasons. You have to look at the output of the field in detail, not filtered through the press, to make this sort of judgment. It's not easy.

The first consensus document of physical climatology was the Charney Report, ca. 1979. Very little of it has been called into doubt by subsequent analysis or observation. Maybe you should start there:

http://web.atmos.ucla.edu/~brianpm/download/charney_report.pdf

Sorry for bad editing. You take my point.

Michael,

Are you suggesting that in the past 20 years there has been no new evidence that might have altered the opinion of an informed skeptical scientist?

The rather sedate trend in global mean temperature does change the picture a bit – it argues against the higher short term sensitivities. But it's nowhere near as big a deal as some people would have it. The increase in very odd meteorological seasons, though, would tend to indicate that the temperature sensitivity is not the only thing we have to worry about.

In terms of evidence that ending net carbon emissions is necessary, 20 years of moving in the wrong direction has certainly made matters more urgent rather than less.

I find it very difficult to imagine how an informed competent professional (on climate physics or paleoclimate) could think otherwise. Except for a handful of people who were already outspoken against carbon policy 20 years ago, I can't think of an informed competent professional physical climatologist who doesn't think the urgency of the problem has increased considerably.

Blair,

I think that you have blogged around the elephant in the room. There are vast cultural differences between academic scientists and industrial scientists that affect their biases in interpreting and presenting information. You are describing the biases and not their root causes.

The ivory tower is very real and imparts an arrogant disregard for contrary opinions and to some extent facts. This arrogance excuses very bad behavior amongst peers and allows for the denigration of individuals who disagree and the exclusion of their work.

Your ability to dismiss information in such an offhand manner is very revealing. 20 years ago, when global temperatures had been increasing for 20 years, the short-term trend was THE metric for alarming people about the greenhouse effect. Now it is convenient for you to ignore the short term. Interesting.

20 years ago it wasn't the increasing CO2 that threatened catastrophic results but the positive feedbacks resulting from the small incremental impacts of the CO2.

An informed skeptical person might think that the 20 years since then could have suggested a mitigation of these fears, not an amplification. Strange.

+1

Money down Tobis will not understand your comment at all, Jeff. Warmies just don’t get why the rest of us aren’t alarmed.

How about the economics? Projections of insupportable costs and consequences of warming depend, amongst other things, on a flat-line extrapolation of “real dollars”, i.e. a minuscule discount rate. But a standard 3-5% rate of ROI and dollar-value inflation results in a ~>50:1 disparity between incurring “mitigation” costs now and “adaptation” costs later. And the latter only IFF drastic warming occurs!!
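The size of the disparity depends on both the rate and the time horizon, and the comment gives only the rate. The Python sketch below assumes a constant annual rate with annual compounding (the function names are hypothetical); it simply checks what horizon produces a 50:1 ratio and does not endorse any particular discount rate.

```python
import math


def growth_factor(rate: float, years: float) -> float:
    """How much $1 grows at a constant annual rate (equivalently, the factor
    by which a cost `years` ahead is discounted to today)."""
    return (1.0 + rate) ** years


def years_to_ratio(rate: float, ratio: float) -> float:
    """Years needed for compounding at `rate` to reach `ratio`:1."""
    return math.log(ratio) / math.log(1.0 + rate)


for rate in (0.03, 0.04, 0.05):
    print(f"{rate:.0%}: 50 yrs -> {growth_factor(rate, 50):6.1f}:1, "
          f"100 yrs -> {growth_factor(rate, 100):6.1f}:1, "
          f"50:1 reached after {years_to_ratio(rate, 50):5.1f} yrs")
```

At 4% the 50:1 figure corresponds to roughly a century; across the 3 to 5% range, a 100-year horizon gives ratios from about 19:1 to about 130:1.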

And the benefits of warming, easily visible historically and currently, are arbitrarily set at ~0. Prestidigitation, indeed.

Pingback: Does the Climate Change Debate Come Down to Trust Me versus Show Me? – Further thoughts on Error Avoidance | A Chemist in Langley

Pingback: On a Broader Definition of a “Lukewarmer” | A Chemist in Langley

Pingback: More on “Professionalism” in the Climate Change debate | A Chemist in Langley